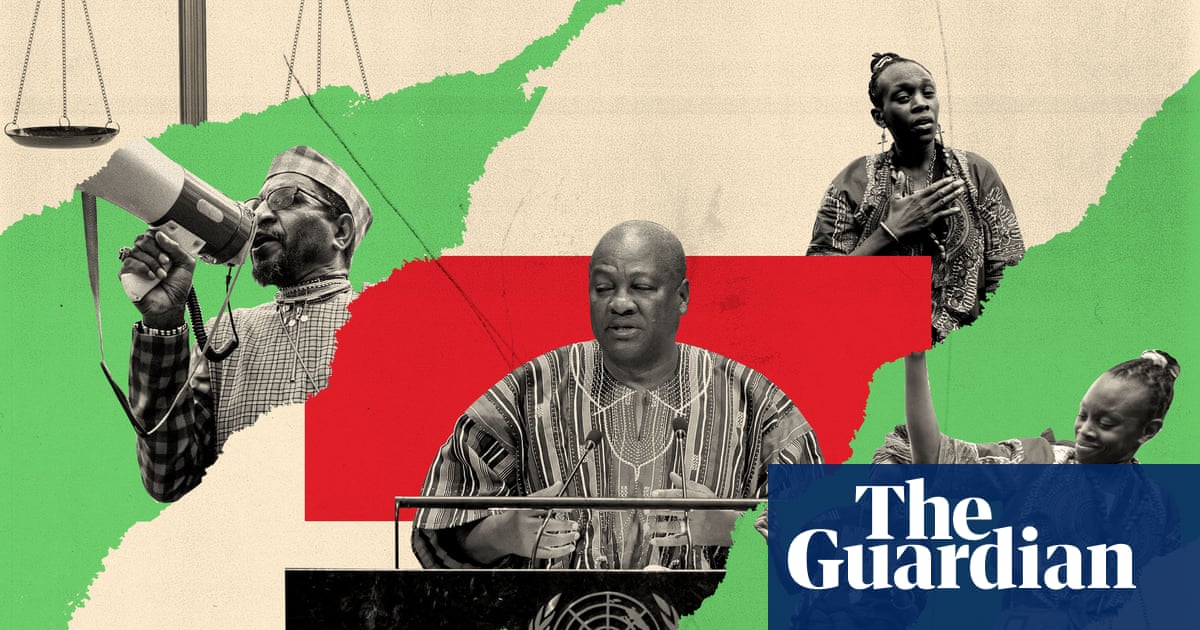

Human rights organisations, academics and writers have called on Ofcom to clarify what the high court's ruling that the ban on Palestine Action was unlawful means for online platforms while the home secretary's appeal against the judgment is pending.

The Metropolitan police have said that officers will no longer arrest people at protests who express support for the direct action group. But the signatories of a letter to Ofcom say it is unclear what it will mean for platforms which have duties to remove terrorist content under the Online Safety Act.

Open Rights Group, Amnesty International UK, Big Brother Watch, Access Now and others have asked the communications regulator to clarify whether platforms are still expected to remove content. They also want to know how new duties to remove terrorist content will be implemented and whether content can be restored if the government loses its appeal.

Sara Chitseko, the pre-crime programme manager at Open Rights Group, said: “The UK’s vague definition of terrorism and legal duties under the Online Safety Act already risk content being wrongly defined as illegal and removed. Now there is additional confusion over whether tech companies are targeting and removing online content relating to Palestine Action.

“In light of the court’s judgment and commentary on freedom of expression, Ofcom need to provide immediate guidance to ensure that important public debates about Palestine are not being censored.”

Last week, judges decided that the proscription order banning Palestine Action under anti-terrorism laws would remain in place pending Shabana Mahmood's appeal against the high court's decision. This means the legal position remains that content supportive of Palestine Action must be removed when a platform finds it or when it is reported to the platform.

But the signatories to the letter, who also include Statewatch, Netpol, Article 19 and the forensic computer expert Duncan Campbell, urge Ofcom to follow in the footsteps of the Met by clarifying the situation pending the appeal. They say it will become an even more urgent issue if new requirements to proactively scan for illegal content, restrict livestreaming and suppress algorithms come into effect later this year as expected.

The letter says the proscription of Palestine Action "raised serious concerns about the criminalisation of political expression". It adds that there had been an escalation in content removals across platforms such as Instagram, TikTok and X, including the use of algorithms to hide Palestine solidarity posts, as well as cases in which people have faced police action for expressing political views online.

It continues: "The high court ruling should be a turning point. It demonstrates how easily counter-terror powers and platform regulation can be used to silence debate and suppress dissent and how difficult it is to undo those harms once systems of censorship and surveillance are put in place."

When contacted by the Guardian, Ofcom did not directly address the situation pending the appeal. “Under the Online Safety Act, tech firms must take down illegal terrorist content swiftly when they become aware of it,” a spokesperson said. “There’s no requirement on sites and apps to restrict legal content for adult users. In fact, in carrying out their duties to keep people safe, the act requires platforms to have particular regard to the importance of protecting users’ right to freedom of expression.”