Online content creators are not just building fake images and videos of prominent public figures; they are also fabricating people and using them in military contexts, which can make them money and even serve as effective propaganda, according to artificial intelligence researchers.

Some of these online avatars are sexualized images of women wearing camouflage garb that have generated a significant audience and helped create an idealized image of political figures like Donald Trump, even if the viewer knows the content is not real, according to experts.

“We are blending the lines between political cartoons and reality,” said Daniel Schiff, an assistant professor of technology policy at Purdue University and co-director of the Governance and Responsible AI Lab (Grail). “A lot of people feel like these images or videos or the stories they convey, feel true.”

The number of political deepfakes has increased dramatically in recent years, according to a Grail database. Since the start of 2025, the organization has catalogued more than 1,000 English-language social media posts featuring fake images or videos of prominent political figures and politically important social issues and events.

In the previous eight years combined, the organization recorded 1,344 such incidents.

That uptick is largely because generative AI technology has improved, which has allowed people to quickly create such content, Schiff said.

We have made it “trivially easy to generate a scene that looks pretty realistic and to place real individuals into scenes”, said Sam Gregory, executive director of Witness, an organization dedicated to human rights and combating deceptive AI.

But the fake avatars – which mimic real ordinary people rather than known figures – are a different matter again.

AI women in uniform, Trump and ... feet photos?

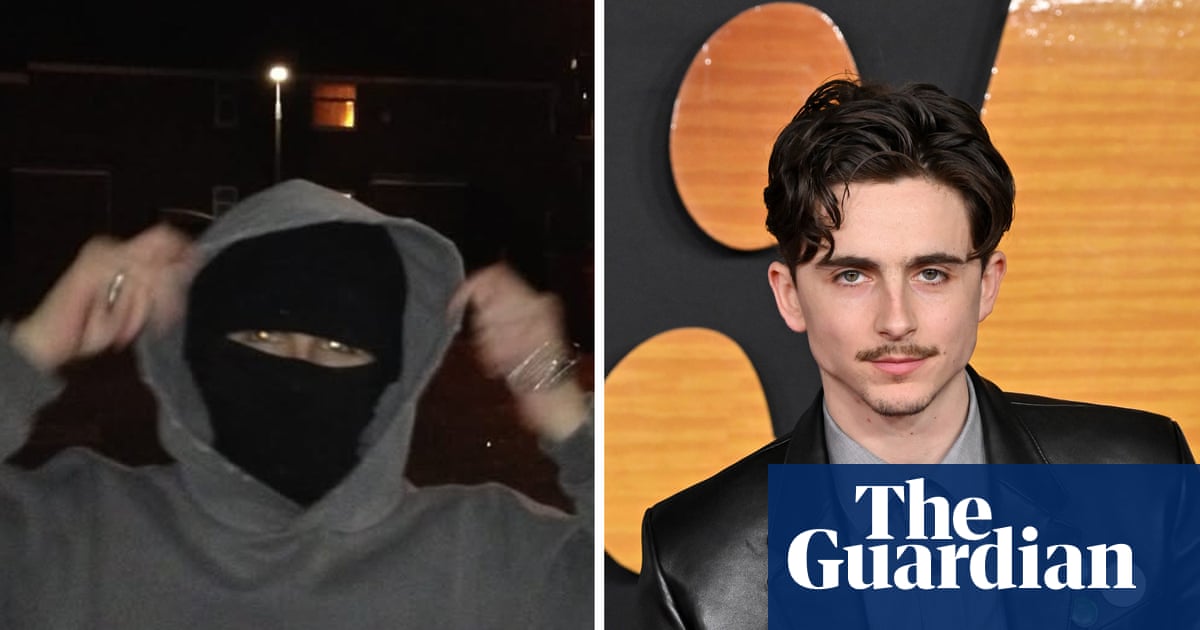

In December 2025, an account for Jessica Foster, an AI-generated blond woman often in US military uniform, went live on Instagram and started sharing photos of Foster atop a bunk bed in barracks; sitting in an office chair with her feet on a desk; and walking a tarmac in high heels beside Trump, according to Fast Company.

The creators intentionally used that footwear and had her feet appear prominently.

The images of Foster, who is not an actual person, drew more than 1 million followers on Instagram. The posts were then linked to an account on OnlyFans, a platform largely used by pornography creators, where visitors could buy foot photos supposedly from Foster.

“Why do you NEVER reply?” a user asked the Foster profile on Instagram, according to the Washington Post.

The account has been removed in recent days.

“A lot of the AI-generation is to basically get clicks and money or to drive people to a more lucrative place,” Gregory said.

But such tools can also serve a political purpose. During the war in Iran, a flood of videos has appeared on social media featuring fake female Iranian soldiers who say: “Habibi, come to Iran,” the BBC reported.

One of the giveaways was that Iran prohibits women from serving in combat roles.

Creators also built an AI-generated female police officer that has more than 26,000 followers on TikTok. A video features it smiling with the text: “President Trump deported over 2.5 million people out of the country. Is this what you voted for? Yes.”

It got more than 200 likes and 23 comments, including: “absolutely yes.”

During the 2024 election, Trump also shared AI-generated images that depicted Taylor Swift fans supporting him. Since 2024, Trump and the White House have shared at least 18 deepfakes on social media, according to the Grail database.

But the issue is not limited to the right. California governor Gavin Newsom, who many predict will run for president in 2028, has also started sharing deepfakes aimed at Trump, including one that shows the president smiling at a hologram of Jeffrey Epstein.

The AI researchers said political deepfakes can still be persuasive even if consumers know they aren’t real.

Foster is “walking in high heels, in a military uniform, her military badge is completely wrong. There is no reason she would be hanging out with President Trump and Nicolás Maduro”, Gregory said. “None of this, if you think about it, makes much sense or bears up to scrutiny. But people aren’t necessarily looking for things that are real; they are looking for things that represent their beliefs.”

The deepfakes then make it less likely that people will reconsider those beliefs, said Valerie Wirtschafter, a Brookings Institution fellow in its artificial intelligence and emerging technology initiative.

The deepfakes are “just another layer added on in terms of this process of reinforcing, rather than revisiting, what people believe is true”, said Wirtschafter.

‘It’s sort of like a troll farm’

The researchers worry that things will only get worse. The technology used to build Foster could also be used to produce what researchers described as “AI swarms”, capable of “coordinating autonomously, infiltrating communities, and fabricating consensus efficiently”, according to a recent study in Science.

“It’s sort of like a troll farm without actually having to have people any more,” Wirtschafter said.

But humans can still stop malicious actors from using AI to destabilize society, the researchers said.

The Coalition for Content Provenance and Authenticity has developed a “technical standard for publishers, creators and consumers to establish the origin and edits of digital content”, according to the group.

It’s “embedded in a photo you take on a camera or piece of content created with an AI tool or edited with an AI tool, and then distributed on a platform, so it’s meant to be a set of cryptographically signed metadata”, Gregory said.

The technology companies then need to use that information to label whether the content included AI, Gregory said.

LinkedIn, Pinterest, TikTok and YouTube have all committed to labelling AI-generated content. But an investigator with the Indicator, a media outlet, recently posted 200 AI-generated images and videos on those platforms to determine whether they actually labelled them. He found that the most diligent ones – LinkedIn and Pinterest – still labelled only 67% of that content; Instagram labelled just 15 of 105 fake images.

Meta’s oversight board recently stated that it was concerned by reports that the company was “inconsistently implementing” the Coalition’s standards “even on content generated by its own AI tools, and that only a portion of such output receives proper labeling”.

Gregory said the inconsistent labelling is due to a “failure of political will at the senior levels” of the big tech companies.

“We don’t need to give up on the ability to discern what is real from synthetic,” he said. “But we do need to act fast.”
